Précis - Tying It All Together

Great work this week! Thank you for your insights and collaborative work in our cloud computing activities, for sharing your experiences using the cloud, and for providing excellent feedback.

Recap

We began the week by exploring and defining the cloud, and by looking at the pros and cons of cloud-based applications. The benefits include ease of access through web-enabled devices and lower initial acquisition costs; the key concern is privacy, especially in the fields of education, medicine and finance.

Then, we looked at an overview of the market for public cloud services, and found that firms are turning to cloud-based systems in order to take advantage of cloud computing’s flexibility and scalability and, most of all, to gain efficiencies, including simplifying and reducing IT management, infrastructure and cost.

Next, we looked at professional development in the medical profession and the use of cloud-based Continuing Education (CE) solutions, as well as mobile apps. We discovered that cloud solutions for healthcare are required by legislation to provide far more security in order to protect the personal information of stakeholders. We also looked at a few examples of how the cloud is used in medical and dental schools, with instructors at these schools noting that collaboration with peers in school cloud environments helps prepare students for the collaborative professional environment they will soon enter.

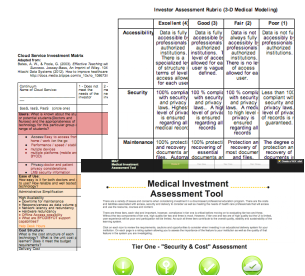

With our background in cloud-based medical professional development, we embarked on a collaborative task to design an investor assessment tool for cloud-based learning solutions on behalf of a medical institution that is implementing improvements to its professional education programs.

Discussion

Despite some initial hiccups with some new collaboration spaces, groups have presented assessment tools that provide excellent evaluation of potential cloud solutions. Most groups created an assessment rubric based on a four- or five-point scale, because it is a simple, easy-to-use assessment tool that most of us as educators were familiar with. Most groups began by deciding upon the most important categories to assess, then filled in the specifics for each category.

It was interesting to observe how each group approached the collaborative task. While some groups decided upon a general framework for evaluating cloud-based solutions, others were markedly more specialized towards the medical field. A couple of groups further concentrated their assessment tools on a particular need, such as 3D medical modelling. All groups identified a number of important factors to consider when evaluating a cloud provider. Chief among these, for nearly all groups, was security. The groups emphasized a need for robust security measures to prevent unauthorized access to institution and patient data. Another common area for consideration was usability, as groups cited a need for an easy-to-use, intuitive interface that may also allow for customization. Cost was the third major area for consideration, as groups created rubrics to evaluate the upfront costs as well as the continuing maintenance and migration costs of possible cloud solutions. Other common areas for consideration included functionality, scalability/flexibility, and accessibility/availability. Overall, we were impressed with the thought, depth, and breadth of analysis in many of the assessment tools.
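To make the rubric approach concrete, here is a minimal sketch of how a five-point rubric over the categories mentioned above might be tallied. The category names are taken from the groups' tools; the equal weighting of categories, the function names, and the sample scores are purely illustrative assumptions and do not reproduce any group's actual rubric.

```python
# Hypothetical rubric sketch. Categories come from the discussion above;
# the equal weighting and the sample scores are illustrative assumptions.
CATEGORIES = [
    "security",
    "usability",
    "cost",
    "functionality",
    "scalability",
    "accessibility",
]
MAX_SCORE = 5  # five-point scale


def rubric_total(scores):
    """Sum the category scores after checking each is rated 1..MAX_SCORE."""
    for category in CATEGORIES:
        if category not in scores:
            raise ValueError(f"missing score for {category}")
        if not 1 <= scores[category] <= MAX_SCORE:
            raise ValueError(f"{category} must be rated 1 to {MAX_SCORE}")
    return sum(scores[category] for category in CATEGORIES)


def rubric_percent(scores):
    """Express the total as a percentage of the maximum possible score."""
    return 100 * rubric_total(scores) / (len(CATEGORIES) * MAX_SCORE)


# Made-up ratings for an imaginary cloud solution.
example_scores = {
    "security": 5,
    "usability": 4,
    "cost": 3,
    "functionality": 4,
    "scalability": 4,
    "accessibility": 4,
}

print(rubric_total(example_scores))    # 24 (out of a possible 30)
print(rubric_percent(example_scores))  # 80.0
```

A group that considers security more important than the other categories could replace the plain sum with a weighted one; the sketch keeps equal weights only for simplicity.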

Reflection

We hoped to provide an engaging and informative week on cloud computing with a focus on professional development in the health care field. Our goal was to give participants a different experience from Week 5’s cloud-based OER and, in true constructivist fashion, we decided to build upon this prior knowledge for our OER on cloud computing. By engaging participants in an unfamiliar area such as the medical field, we also hoped it would be a refreshing change that might spark novel ideas.

We sought to provide a simple, clean and easy-to-navigate experience for participants. In prior weeks, we found that moving between sites created a choppy, disorienting experience, so we decided to situate the entire OER on the Weebly site. We trust the introductory video was helpful.

Because one of cloud computing’s strengths is online collaboration, we believed that a group task would provide more meaningful engagement. Keeping participants in their assigned groups seemed to be the optimal way to go, as it should have helped things get going quickly. We thought that having the groups work in different cloud environments was a brilliant idea, although we may have dropped the ball in execution. We chose these particular cloud platforms because we felt they were some of the most intuitive to use. We wanted people to spend their time collaborating on the task rather than trying to figure out how the platform worked. However, we found that groups struggled with the limitations of some of these cloud platforms. In hindsight, we should have spent more time testing each platform to find the best ones for our particular task. That said, there was no obligation to use the assigned tool exclusively. Groups were free to use other means of communication and collaboration, such as Skype, email, or Google Docs. In the future, we would like to give groups a variety of platforms to choose from, in the hope that they will still try something new while finding a platform that better fits the group’s needs. Thanks once again to everyone for their hard work this week!

Survey Results

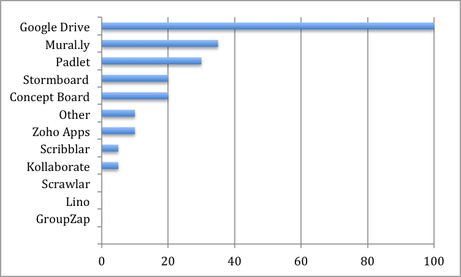

Other: Cacoo, Basecamp, Asana, Marqueed, GoVisually

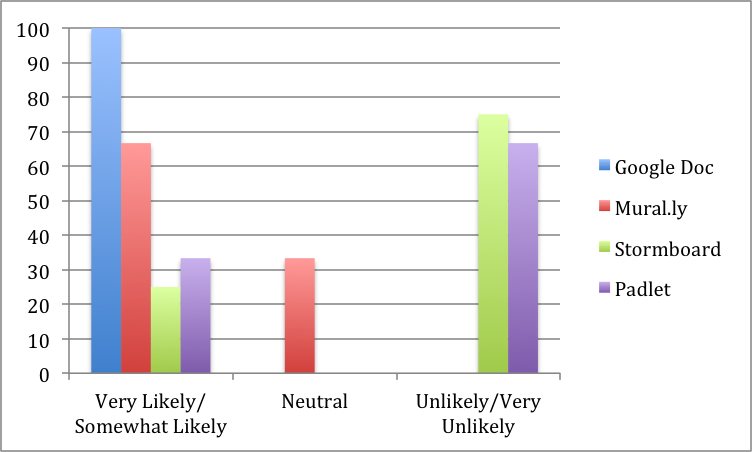

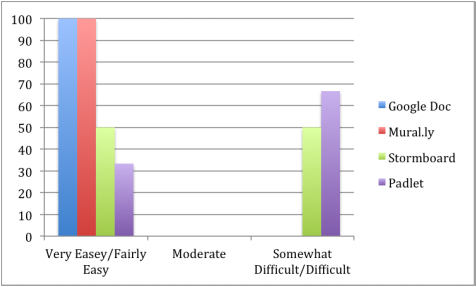

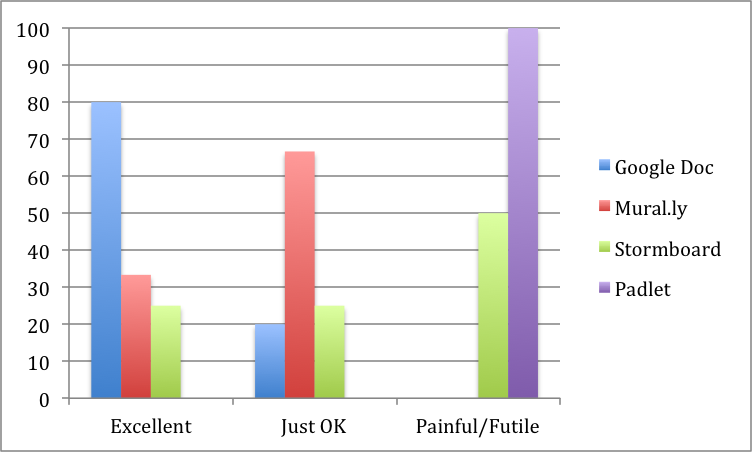

It is clear from the results that Google Drive has been universally embraced as the cloud space to beat in terms of ease of use, future likelihood of use, and overall experience, with 100% of respondents having used it in the past.

Padlet and Stormboard, on the other hand, achieved nearly universal condemnation for their limitations during our OER activity. Despite the overwhelming disapproval, users noted past successes with these cloud spaces in tasks that better took advantage of their unique strengths.

Some interesting observations not evident in the above charts:

Generally, respondents who had not previously used any cloud collaboration space other than Google Drive were more critical of the other spaces than those with broader experience. Those who had used a variety of other cloud spaces in the past generally gave more positive reviews of their assigned cloud space.

It’s interesting to note the continued success and ubiquity of Google Docs in this market. All respondents had used it, and it was clearly the most preferred solution for online collaboration during this OER. There are, however, many other innovative and worthwhile alternatives for cloud collaboration, each offering unique features that make collaborating online with others easier and more productive in certain situations. It was a secondary goal of ours to encourage participants to try other cloud spaces, and we hope you will seek some of those out for future use in your own contexts.
